Surface code

Topological quantum error correcting code

From Wikipedia, the free encyclopedia

The surface code is a topological quantum error correcting code, and an example of a stabilizer code, defined on a two-dimensional spin lattice.[1] The first type of surface code, introduced by Alexei Kitaev in 1997, was the toric code. It gets its name from its periodic boundary conditions, which give it the shape of a torus. These conditions give the model translational invariance, which is useful for analytic study. The toric code is the simplest and best-studied of the quantum double models.[2] It is also the simplest example of topological order, namely Z2 topological order (first studied in the context of Z2 spin liquids in 1991).[3][4] The toric code can also be considered to be a Z2 lattice gauge theory in a particular limit.[5]

However, on many quantum computing platforms, a surface code is much easier to realize experimentally if it can be embedded in a 2D plane. This motivated the design of another type of surface code with open boundary conditions, the planar code.[6] As of 2025, Google Quantum AI has implemented a distance-7 planar code on its newest generation of superconducting quantum processors, Willow. This implementation demonstrated operation below the error-correction threshold.[7]

Definition

The surface code is defined on a two-dimensional lattice, usually chosen to be the square lattice. Below, we will first illustrate the basic concept with the toric code, where the lattice has periodic boundary conditions, i.e., the top boundary is connected to the bottom and the left boundary to the right. Topologically, this is equivalent to defining the lattice on a torus.

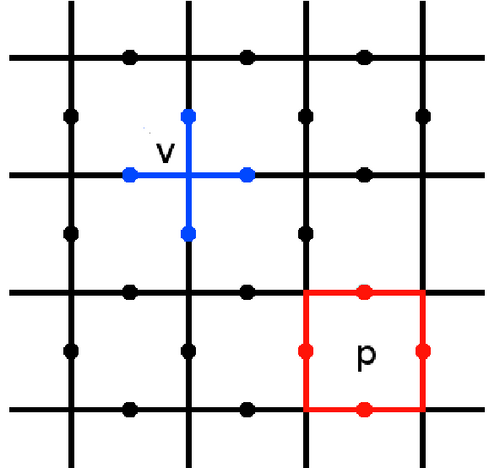

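A qubit is located on each edge of the lattice. For a d × d lattice, there are d² horizontal edges and d² vertical edges, thus 2d² qubits in total. Stabilizer operators are defined on the qubits around each vertex v and plaquette (face) p of the lattice as follows:

$$A_v = \prod_{i \in v} X_i, \qquad B_p = \prod_{i \in p} Z_i.$$

Here $i \in v$ denotes the edges touching the vertex $v$, and $i \in p$ denotes the edges surrounding the plaquette $p$. $X_i$ and $Z_i$ are the Pauli operators acting on qubit $i$. The code space of the toric code is the subspace on which all stabilizers act trivially. Hence for any state $|\psi\rangle$ in this space it holds that

$$A_v |\psi\rangle = |\psi\rangle, \qquad B_p |\psi\rangle = |\psi\rangle \qquad \text{for all } v, p.$$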

For the toric code, this space is four-dimensional, and so can be used to store two qubits of quantum information. This can be proven by counting the independent stabilizer operators. For a d × d lattice, there are d² vertex stabilizers and d² plaquette stabilizers. However, the product of all vertex stabilizers is I, and so is the product of all plaquette stabilizers, because every edge belongs to exactly two vertices and to exactly two plaquettes. Therefore there are 2d² − 2 independent stabilizers. Each one halves the dimension of the code space, leaving 2d² − (2d² − 2) = 2 logical qubits, i.e., a code space of dimension 2² = 4.
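The counting argument can be checked numerically on small lattices. The following Python sketch builds the vertex and plaquette operators of the d × d toric code as bitmasks over the 2d² edge qubits and computes their ranks over GF(2). Because the X-type and Z-type stabilizers form separate families, the number of logical qubits is the number of qubits minus both ranks. The edge indexing (`h`, `v`) and all function names are assumptions made for this illustration, not a standard convention.

```python
# Sketch: count the logical qubits of the d x d toric code via GF(2) ranks.
# Hypothetical edge indexing chosen for this example (coordinates taken mod d, d >= 2):
#   horizontal edge h(r, c) joins vertex (r, c) to (r, c + 1) -> index r*d + c
#   vertical edge   v(r, c) joins vertex (r, c) to (r + 1, c) -> index d*d + r*d + c


def h(r, c, d):
    return (r % d) * d + (c % d)


def v(r, c, d):
    return d * d + (r % d) * d + (c % d)


def vertex_stabilizers(d):
    """Support of each X-type operator A_v as a bitmask over the 2*d*d qubits."""
    return [
        sum(1 << e for e in (h(r, c, d), h(r, c - 1, d), v(r, c, d), v(r - 1, c, d)))
        for r in range(d)
        for c in range(d)
    ]


def plaquette_stabilizers(d):
    """Support of each Z-type operator B_p; plaquette (r, c) has vertex (r, c) as its top-left corner."""
    return [
        sum(1 << e for e in (h(r, c, d), h(r + 1, c, d), v(r, c, d), v(r, c + 1, d)))
        for r in range(d)
        for c in range(d)
    ]


def gf2_rank(rows):
    """Rank over GF(2) of integer bitmasks, by Gaussian elimination on leading bits."""
    pivots = {}  # leading bit -> reduced row having that leading bit
    for row in rows:
        while row:
            lead = row.bit_length() - 1
            if lead not in pivots:
                pivots[lead] = row
                break
            row ^= pivots[lead]
    return len(pivots)


def toric_logical_qubits(d):
    n_qubits = 2 * d * d
    # X-type and Z-type stabilizers are counted separately (a CSS code).
    return n_qubits - gf2_rank(vertex_stabilizers(d)) - gf2_rank(plaquette_stabilizers(d))


for d in (3, 4, 5):
    print(d, gf2_rank(vertex_stabilizers(d)), toric_logical_qubits(d))
# 3 8 2
# 4 15 2
# 5 24 2
```

The rank of the d² vertex operators comes out as d² − 1, which reflects the single relation "product of all A_v is I".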

The occurrence of errors will usually move the state out of the code space, resulting in vertices and plaquettes for which the above condition does not hold. Specifically, a Pauli Z error on qubit i flips the two vertex stabilizers $A_v$ such that $i \in v$ (the endpoints of the edge i). Likewise, a Pauli X error on qubit i flips the two plaquette stabilizers $B_p$ such that $i \in p$ (the plaquettes on either side of the edge i). The positions of these violations form the syndrome of the code, which can be used for error correction.
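Here is a minimal sketch of syndrome extraction on the toric code. It assumes errors are given as lists of edges with hypothetical labels: `("h", r, c)` joins vertex (r, c) to (r, c + 1), `("v", r, c)` joins (r, c) to (r + 1, c), and plaquette (r, c) has vertex (r, c) as its top-left corner. Two flips of the same stabilizer cancel, so the code toggles each affected site in a set. The result therefore lists exactly the violated stabilizers.

```python
# Sketch: syndrome of Pauli errors on the d x d toric code (periodic boundaries, d >= 2).
# Hypothetical edge labels: ("h", r, c) joins vertex (r, c) to (r, c + 1);
# ("v", r, c) joins vertex (r, c) to (r + 1, c). Plaquette (r, c) has vertex
# (r, c) as its top-left corner. All coordinates are taken mod d.


def edge_endpoints(edge, d):
    kind, r, c = edge
    if kind == "h":
        return [(r % d, c % d), (r % d, (c + 1) % d)]
    return [(r % d, c % d), ((r + 1) % d, c % d)]


def edge_plaquettes(edge, d):
    kind, r, c = edge
    if kind == "h":  # plaquettes above and below a horizontal edge
        return [((r - 1) % d, c % d), (r % d, c % d)]
    return [(r % d, (c - 1) % d), (r % d, c % d)]  # left and right of a vertical edge


def syndrome(z_errors, x_errors, d):
    """Return (violated vertices, violated plaquettes) for the given error edges.

    A Z error flips the X-type A_v at both endpoints of its edge; an X error flips
    the Z-type B_p on both sides. Two flips of the same stabilizer cancel, so each
    site is toggled and only sites flipped an odd number of times remain.
    """
    e_anyons, m_anyons = set(), set()
    for edge in z_errors:
        e_anyons ^= set(edge_endpoints(edge, d))
    for edge in x_errors:
        m_anyons ^= set(edge_plaquettes(edge, d))
    return e_anyons, m_anyons


d = 5
e, m = syndrome([("h", 2, 1)], [], d)
print(sorted(e), sorted(m))  # [(2, 1), (2, 2)] []

# Extending the Z string by one edge moves an anyon instead of creating a new pair.
e, m = syndrome([("h", 2, 1), ("h", 2, 2)], [], d)
print(sorted(e))  # [(2, 1), (2, 3)]

# A Z string wrapping all the way around the torus leaves no violations at all.
e, m = syndrome([("h", 2, c) for c in range(d)], [], d)
print(sorted(e))  # []
```

The last two calls anticipate the anyon picture described next. Extending an error string transports the anyon at its end. A string that closes into a loop returns the state to the code space.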

A distinctive feature of topological codes such as the surface code is that stabilizer violations can be interpreted as quasiparticles. Specifically, if the code is in a state $|\psi\rangle$ such that $A_v|\psi\rangle = -|\psi\rangle$, a quasiparticle known as an e anyon can be said to exist on the vertex v. Similarly, a violation of some $B_p$ is associated with an m anyon on the plaquette p. The code space, with no stabilizer violation, corresponds to the anyonic vacuum. The product of all vertex (resp. plaquette) stabilizers is I, as noted above. This means that the number of e (resp. m) anyons on a toric code is always even.

A single-qubit Z error can be associated with an edge, and creates a pair of e anyons at both endpoints of that edge. However, two e anyons at the same location will annihilate each other, so a Z error can also effectively move an e anyon along an edge, allowing e anyons to be transported on the lattice. If the initial pair of anyons end up meeting each other and annihilating, then their paths form a loop.

- If the loop is topologically trivial, then it can be written as a combination of plaquettes (Z stabilizer generators) on the lattice. Therefore the loop represents a Z stabilizer of the code, and has no effect on the stored information. The annihilation of the anyons, in this case, corrects all of the errors involved in their creation and transport.

- However, if the loop is topologically non-trivial, then it represents a non-trivial logical operator. Although re-annihilation of the anyons returns the state to the code space, it also implements a logical operation on the stored information. The errors, in this case, are therefore not corrected but consolidated.

- On a torus, there are two independent topologically non-trivial loops: One looping horizontally on the toric code lattice, one looping vertically. These can be identified with the Z operators of the two logical qubits encoded in the toric code.

By considering the dual graph of the lattice, it can be seen that the above paragraph also applies to X errors and m anyons. Note that the horizontal Z operator and the vertical X operator belong to the same qubit, and vice versa. This ensures the correct commutation relations between logical operators.
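The statements about loops and logical operators can be checked on a small torus. This sketch fixes d = 5 and uses a hypothetical edge indexing defined in the code, representing each operator by the bitmask of edges it acts on. An X-type and a Z-type Pauli string anticommute exactly when their supports overlap on an odd number of qubits. The sketch checks that each non-trivial Z loop commutes with every vertex operator but is not a product of plaquette operators. It also checks that each Z loop anticommutes only with the X dual loop running in the perpendicular direction.

```python
# Sketch: logical operators of the d x d toric code as edge bitmasks.
# Hypothetical indexing: horizontal edge h(r, c) joins vertex (r, c) to (r, c + 1),
# vertical edge v(r, c) joins (r, c) to (r + 1, c); coordinates taken mod d.
d = 5


def h(r, c):
    return (r % d) * d + (c % d)


def v(r, c):
    return d * d + (r % d) * d + (c % d)


def mask(edges):
    return sum(1 << e for e in set(edges))


def gf2_rank(rows):
    pivots = {}  # leading bit -> reduced row
    for row in rows:
        while row:
            lead = row.bit_length() - 1
            if lead not in pivots:
                pivots[lead] = row
                break
            row ^= pivots[lead]
    return len(pivots)


def in_span(row, rows):
    """True if `row` is a GF(2) combination (i.e. a product) of `rows`."""
    return gf2_rank(rows + [row]) == gf2_rank(rows)


def anticommute(x_mask, z_mask):
    """An X string and a Z string anticommute iff they share an odd number of qubits."""
    return bin(x_mask & z_mask).count("1") % 2 == 1


A = [mask([h(r, c), h(r, c - 1), v(r, c), v(r - 1, c)]) for r in range(d) for c in range(d)]
B = [mask([h(r, c), h(r + 1, c), v(r, c), v(r, c + 1)]) for r in range(d) for c in range(d)]

# Z strings along non-contractible loops of the lattice ...
Z_horizontal = mask([h(0, c) for c in range(d)])
Z_vertical = mask([v(r, 0) for r in range(d)])
# ... and X strings along non-contractible loops of the dual lattice (crossing edges).
X_vertical = mask([h(r, 0) for r in range(d)])
X_horizontal = mask([v(0, c) for c in range(d)])

for name, Z in (("Z_horizontal", Z_horizontal), ("Z_vertical", Z_vertical)):
    no_e_anyons = not any(anticommute(a, Z) for a in A)
    print(name, no_e_anyons, in_span(Z, B))  # True False: a non-trivial logical operator

# A contractible loop (boundary of two adjacent plaquettes) is a stabilizer.
print(in_span(B[0] ^ B[1], B))  # True

# Horizontal Z pairs with vertical X (one shared qubit), vertical Z with horizontal X.
print(anticommute(X_vertical, Z_horizontal), anticommute(X_horizontal, Z_horizontal))  # True False
print(anticommute(X_horizontal, Z_vertical), anticommute(X_vertical, Z_vertical))  # True False
```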

Error correction

Consider the noise model in which bit-flip and phase-flip errors occur independently on each qubit, each with probability p. When p is low, this creates sparsely distributed pairs of anyons that have not moved far from their point of creation. Correction can be achieved by identifying the pairs in which the anyons were created (up to an equivalence class), and then re-annihilating them to remove the errors. As p increases, however, it becomes increasingly ambiguous how the anyons should be paired without risking the formation of topologically non-trivial loops. This gives a threshold probability below which error correction almost certainly succeeds in the limit of large lattices. Through a mapping to the random-bond Ising model, this critical probability has been found to be around 11%.[8]

Thresholds have also been found for other error models. In all cases studied so far, the code has been found to saturate the hashing bound. For some error models, such as biased noise where bit-flip errors occur more often than phase-flip errors or vice versa, lattices other than the square lattice must be used to achieve the optimal thresholds.[9][10]
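For the independent bit-flip and phase-flip model described above, the hashing bound can be evaluated in a few lines. In this sketch, the four Pauli outcomes I, X, Z, Y occur with probabilities (1 − p)², p(1 − p), p(1 − p) and p², whose entropy is 2H₂(p). The hashing rate 1 − 2H₂(p) therefore reaches zero at p ≈ 11%, consistent with the threshold quoted for this noise model. The function names are illustrative only.

```python
import math


def binary_entropy(p):
    """H2(p) in bits, for 0 < p < 1."""
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


def hashing_rate(p):
    # Independent X and Z flips, each with probability p: the Pauli outcomes
    # I, X, Z, Y occur with probabilities (1-p)^2, p(1-p), p(1-p), p^2, whose
    # Shannon entropy is 2*H2(p). The hashing rate is 1 minus that entropy.
    return 1 - 2 * binary_entropy(p)


def hashing_threshold(lo=1e-9, hi=0.5, tol=1e-12):
    """Bisection for the p in (0, 0.5) at which the hashing rate drops to zero."""
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if hashing_rate(mid) > 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


print(round(hashing_threshold(), 4))  # 0.11
```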

Loss errors have also been studied using statistical-physics mappings; Masayuki Ohzeki estimated error thresholds for surface codes with lost qubits by analyzing related spin-glass models.[11]

These thresholds are upper limits, and they are of little practical value unless efficient algorithms are found that achieve them. The most widely used decoding algorithm is minimum-weight perfect matching.[12] Applied to the noise model with independent bit-flip and phase-flip errors, it achieves a threshold of around 10.5%, only a little short of the 11% maximum. However, matching does not work as well when the bit-flip and phase-flip errors are correlated, as in depolarizing noise.
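To make the matching step concrete, here is a minimal Python sketch that pairs e anyons on a d × d torus by exact minimum-weight perfect matching. It uses the torus Manhattan distance as the weight of a pair, which is the length of the shortest Z string that would re-annihilate the two anyons. It finds the matching by exhaustive recursion rather than by the polynomial-time blossom algorithm that practical decoders rely on, so it is only suitable for a handful of anyons. The function names are hypothetical.

```python
from functools import lru_cache


def torus_distance(a, b, d):
    """Manhattan distance between vertices a and b on a d x d torus."""
    dr = abs(a[0] - b[0]) % d
    dc = abs(a[1] - b[1]) % d
    return min(dr, d - dr) + min(dc, d - dc)


def min_weight_perfect_matching(anyons, d):
    """Exact minimum-weight perfect matching of anyon positions by exhaustive search.

    Exponential time: a stand-in for the blossom algorithm used in practice,
    adequate only for a handful of anyons. Returns (total weight, pairs).
    """
    anyons = tuple(sorted(anyons))
    if len(anyons) % 2:
        raise ValueError("anyons on a torus come in pairs")

    @lru_cache(maxsize=None)
    def best(remaining):
        if not remaining:
            return 0, ()
        first, rest = remaining[0], remaining[1:]
        candidates = []
        for i, partner in enumerate(rest):
            cost, pairs = best(rest[:i] + rest[i + 1:])
            candidates.append((cost + torus_distance(first, partner, d), ((first, partner),) + pairs))
        return min(candidates)

    return best(anyons)


d = 7
# Syndrome from two short, well-separated error strings.
print(min_weight_perfect_matching({(1, 1), (1, 2), (4, 5), (5, 5)}, d))
# (2, (((1, 1), (1, 2)), ((4, 5), (5, 5))))

# Matching can use the periodic boundary: (0, 0) and (0, 6) are neighbors.
print(torus_distance((0, 0), (0, 6), d))  # 1
```

Applying Z along a shortest path between each matched pair returns the state to the code space. The correction succeeds when these paths, combined with the actual errors, form only topologically trivial loops.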

Open boundary conditions

When adapting the surface code to open boundary conditions, special boundary behaviors arise. As a motivating example, consider defining a surface code in the same way as above, but on an n × n square grid graph. Some vertices on the boundary will have degree 3 instead of 4 (and the corner vertices will have degree 2), so there will be some weight-3 (and weight-2) X stabilizers.

The most important characteristic of such an open boundary is that a Pauli X error no longer necessarily flips two plaquette stabilizers. An edge on the boundary only has one adjacent plaquette, and thus an X error on the corresponding qubit will only flip a single $B_p$. In the language of anyons, it will only create or annihilate a single m anyon. One could say that this type of code boundary (known as a smooth boundary) is a source and sink for m anyons.

In a surface code with open boundaries, one needs to consider not only true loops but also paths that start and end at a boundary, which are usually specific to anyon types. In our n × n grid graph example, an m anyon can be created at any location on the boundary, move across the grid, and be annihilated at any other location on the boundary. However, since there is only one type of boundary, all these paths are topologically trivial. For example, suppose an m anyon is created somewhere in the middle of the top boundary, moves one step horizontally, and is then annihilated again by the top boundary. Its path corresponds to one of the weight-3 X stabilizers mentioned above. Meanwhile, e anyons cannot leave the lattice through a smooth boundary, so all e anyon paths are closed loops within the grid, and these are topologically trivial too. This indicates that this code does not encode any logical qubit, which can be verified by counting qubits and stabilizers. There are 2n(n − 1) qubits (lattice edges) and n² − 1 independent vertex stabilizers; the product of all vertex stabilizers is still I, since every edge touches exactly two vertices. There are also (n − 1)² independent plaquette stabilizers; due to the boundary, the product of all plaquette stabilizers is no longer I, and all of them are independent. The count is then 2n(n − 1) − (n² − 1) − (n − 1)² = 0.
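The count can be confirmed by building the code on the n × n grid graph directly. In this sketch, whose function and key names are chosen for illustration, edges that would fall outside the grid are simply omitted. This automatically produces the weight-3 and weight-2 vertex operators on the boundary. The number of logical qubits is then the number of edges minus the GF(2) ranks of the X-type and Z-type stabilizers.

```python
def gf2_rank(rows):
    """Rank over GF(2) of integer bitmasks."""
    pivots = {}  # leading bit -> reduced row
    for row in rows:
        while row:
            lead = row.bit_length() - 1
            if lead not in pivots:
                pivots[lead] = row
                break
            row ^= pivots[lead]
    return len(pivots)


def grid_code(n):
    """Qubits on the edges of the n x n grid graph (open, smooth boundaries)."""
    edges = {}
    for r in range(n):
        for c in range(n - 1):
            edges[("h", r, c)] = len(edges)  # joins vertex (r, c) to (r, c + 1)
    for r in range(n - 1):
        for c in range(n):
            edges[("v", r, c)] = len(edges)  # joins vertex (r, c) to (r + 1, c)

    def mask(keys):
        # Edges that would lie outside the grid are simply absent.
        return sum(1 << edges[k] for k in keys if k in edges)

    x_stabs = [mask([("h", r, c), ("h", r, c - 1), ("v", r, c), ("v", r - 1, c)])
               for r in range(n) for c in range(n)]
    z_stabs = [mask([("h", r, c), ("h", r + 1, c), ("v", r, c), ("v", r, c + 1)])
               for r in range(n - 1) for c in range(n - 1)]
    return len(edges), x_stabs, z_stabs


for n in (2, 3, 4, 5):
    n_qubits, x_stabs, z_stabs = grid_code(n)
    rx, rz = gf2_rank(x_stabs), gf2_rank(z_stabs)
    print(n, n_qubits, rx, rz, n_qubits - rx - rz)  # last column (logical qubits) is 0
```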

To design an open-boundary surface code with a non-trivial code space, it is necessary to use another type of boundary: the rough boundary, which acts as the dual of the smooth boundary. To create a rough boundary, we start from the smooth boundary and remove the edges (qubits) on the boundary that only neighbor one plaquette, but keep those plaquettes as weight-3 Z stabilizers. The vertex stabilizers on the original boundary are now weight-1 and no longer commute with the modified plaquette stabilizers, so those vertex stabilizers are removed too. This leaves some "dangling" edges on the lattice, hence the name "rough". The result is a boundary that acts as a source and sink for e anyons.

Now consider a lattice with smooth top and bottom boundaries, and rough left and right boundaries. Such a lattice defines an (unrotated) planar code. An m anyon path that starts and ends on the top boundary is still topologically trivial. One running from the top boundary to the bottom boundary, however, is no longer trivial, because the anyon can no longer exit through the left or right boundary. Similarly, the topologically non-trivial path for e anyons is one running from the left boundary to the right boundary. Suppose the original grid has d rows and d + 1 columns of vertices (before removing the vertex stabilizers on the left and right boundaries). Then both types of topologically non-trivial paths have minimum length d, indicating that the code encodes a single logical qubit with code distance d. The number of logical qubits can again be checked by counting qubits and stabilizers: (d² + (d − 1)²) − d(d − 1) − d(d − 1) = 1. Now the vertex stabilizers are all independent too, due to the "dangling edges" that are only part of one vertex stabilizer.
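The same rank check works for the planar code. Following the construction described above, this sketch starts from a grid with d rows and d + 1 columns of vertices. It deletes the vertical edges in the first and last columns (the rough boundaries), keeps the neighboring plaquettes as weight-3 Z stabilizers, and drops the vertex stabilizers of those two columns. The function names and edge keys are illustrative.

```python
def gf2_rank(rows):
    """Rank over GF(2) of integer bitmasks."""
    pivots = {}  # leading bit -> reduced row
    for row in rows:
        while row:
            lead = row.bit_length() - 1
            if lead not in pivots:
                pivots[lead] = row
                break
            row ^= pivots[lead]
    return len(pivots)


def planar_code(d):
    """Unrotated planar code: smooth top/bottom, rough left/right boundaries.

    Starts from a grid with d rows and d + 1 columns of vertices, deletes the
    vertical edges in the first and last columns, and keeps only the vertex
    stabilizers of the interior columns.
    """
    rows, cols = d, d + 1
    edges = {}
    for r in range(rows):
        for c in range(cols - 1):
            edges[("h", r, c)] = len(edges)  # joins vertex (r, c) to (r, c + 1)
    for r in range(rows - 1):
        for c in range(1, cols - 1):  # vertical edges of columns 0 and d are removed
            edges[("v", r, c)] = len(edges)  # joins vertex (r, c) to (r + 1, c)

    def mask(keys):
        return sum(1 << edges[k] for k in keys if k in edges)

    x_stabs = [mask([("h", r, c), ("h", r, c - 1), ("v", r, c), ("v", r - 1, c)])
               for r in range(rows) for c in range(1, cols - 1)]
    z_stabs = [mask([("h", r, c), ("h", r + 1, c), ("v", r, c), ("v", r, c + 1)])
               for r in range(rows - 1) for c in range(cols - 1)]
    return len(edges), x_stabs, z_stabs


for d in (2, 3, 4, 5):
    n_qubits, x_stabs, z_stabs = planar_code(d)
    k = n_qubits - gf2_rank(x_stabs) - gf2_rank(z_stabs)
    print(d, n_qubits, len(x_stabs), len(z_stabs), k)  # k is 1 for every d
```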

Rotated planar code

The rotated planar code is a variant of the planar code that removes almost half of the physical qubits without affecting the code distance. A distance-d rotated planar code has d² physical qubits, compared to d² + (d − 1)² for the unrotated code. The four corners of the unrotated planar code lattice are cut off along diagonal lines, creating new boundaries. The resulting lattice is still in the shape of a square, but rotated by 45°, hence the name.

Conceptually, a surface code can encode a logical qubit with code distance d as long as it has four boundaries with alternating types (smooth or rough), and the distance between opposing boundaries (i.e., boundaries of the same type) along lattice edges (Manhattan distance for the square lattice) is at least d. This condition holds for the rotated square lattice when these four boundaries coincide with the four sides of the rotated square. For example, the northeast and southwest boundaries may be smooth boundaries while the northwest and southeast boundaries are rough boundaries. Diagonal smooth and rough boundaries have weight-2 X and Z stabilizers respectively.

The layout of the rotated planar code is easier to describe in a coordinate system rotated by 45°. In this orientation, the d² qubits are located on the vertices of a d × d square lattice. Both Z and X stabilizers are located on the plaquettes of this rotated lattice, with the two types in a checkerboard pattern. Furthermore, some weight-2 Z (resp. X) stabilizers exist on the left and right (resp. top and bottom) boundaries of the lattice, with one boundary stabilizer on every other boundary edge. The total number of stabilizers is (d − 1)² + 4(d − 1)/2 = d² − 1.
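The layout in the rotated coordinates can be generated programmatically. This sketch uses hypothetical names and is written with odd d in mind, as is usual. Qubit (r, c) sits on vertex (r, c) of the d × d grid. A candidate plaquette (r, c), for −1 ≤ r, c ≤ d − 1, covers whichever of the qubits (r, c), (r, c + 1), (r + 1, c), (r + 1, c + 1) lie inside the grid. Bulk plaquettes alternate between X and Z type. On the boundary, only the weight-2 half-plaquettes of the appropriate type are kept: X on the top and bottom, Z on the left and right. This selects one stabilizer on every other boundary edge.

```python
def gf2_rank(rows):
    """Rank over GF(2) of integer bitmasks."""
    pivots = {}  # leading bit -> reduced row
    for row in rows:
        while row:
            lead = row.bit_length() - 1
            if lead not in pivots:
                pivots[lead] = row
                break
            row ^= pivots[lead]
    return len(pivots)


def rotated_planar_code(d):
    """X- and Z-type stabilizer supports of a distance-d rotated planar code (odd d).

    Qubit (r, c) of the d x d grid has index r*d + c. Candidate plaquette (r, c),
    for -1 <= r, c <= d - 1, covers the qubits (r, c), (r, c+1), (r+1, c), (r+1, c+1)
    that lie inside the grid; its type follows a checkerboard (X when r + c is even).
    """
    def support(r, c):
        cells = [(r + dr, c + dc) for dr in (0, 1) for dc in (0, 1)]
        return sum(1 << (i * d + j) for i, j in cells if 0 <= i < d and 0 <= j < d)

    x_stabs, z_stabs = [], []
    for r in range(-1, d):
        for c in range(-1, d):
            is_x = (r + c) % 2 == 0
            top_or_bottom = r in (-1, d - 1)
            left_or_right = c in (-1, d - 1)
            if top_or_bottom and left_or_right:
                continue  # corner candidates would only have weight 1
            if top_or_bottom and not is_x:
                continue  # top/bottom boundaries keep weight-2 X stabilizers only
            if left_or_right and is_x:
                continue  # left/right boundaries keep weight-2 Z stabilizers only
            (x_stabs if is_x else z_stabs).append(support(r, c))
    return x_stabs, z_stabs


for d in (3, 5, 7):
    x_stabs, z_stabs = rotated_planar_code(d)
    commute = all(bin(a & b).count("1") % 2 == 0 for a in x_stabs for b in z_stabs)
    k = d * d - gf2_rank(x_stabs) - gf2_rank(z_stabs)
    print(d, len(x_stabs) + len(z_stabs), commute, k)
# 3 8 True 1
# 5 24 True 1
# 7 48 True 1
```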

Compared with an unrotated planar code with the same number of physical qubits, a rotated planar code can increase the code distance d by a factor of approximately $\sqrt{2}$. However, there are also many more ways for an anyon to traverse from one boundary to the opposite boundary in d steps, resulting in a larger number of minimum-weight error mechanisms. This may even cause the logical error rate of the rotated code to be higher than that of the unrotated code with the same number of qubits, despite the larger code distance. Regardless, the rotated planar code prevails in the low-error regime, where the physical error rate is significantly below the threshold.[13]
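The factor of about $\sqrt{2}$ follows from equating qubit counts. Here $d_u$ and $d_r$ (notation introduced for this derivation) denote the distances of an unrotated and a rotated planar code with the same number of physical qubits:

$$
d_r^2 = d_u^2 + (d_u - 1)^2 = 2d_u^2 - 2d_u + 1 \approx 2d_u^2
\quad\Longrightarrow\quad d_r \approx \sqrt{2}\, d_u ,
$$

with the approximation becoming exact in ratio as $d_u$ grows.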

Quantum computation

Methods for performing quantum computation on logical information stored within the surface code have been studied, with the properties of the code providing fault tolerance. It has been shown that many qubits can be encoded into the code by extending the stabilizer space using 'holes', which are vertices or plaquettes on which stabilizers are not enforced. However, a universal set of unitary gates cannot be fault-tolerantly implemented by the code's native unitary operations, such as braiding holes, which only generate Clifford gates. Additional techniques are therefore required to achieve universal quantum computing. For example, universal quantum computing can be achieved by preparing magic states via encoded quantum stubs called tidBits, which are substituted for a qubit to teleport in the required additional gates. Furthermore, the preparation of magic states must be fault tolerant, which can be achieved by magic state distillation on noisy magic states. A measurement-based scheme for quantum computation based upon this principle has been found, whose error threshold is the highest known for a two-dimensional architecture.[14][15]

Hamiltonian and self-correction

Since the stabilizer operators of the surface code are quasilocal, acting only on spins located near each other on a two-dimensional lattice, it is not unrealistic to define the following Hamiltonian:

$$H_{\text{TC}} = -J \sum_v A_v - J \sum_p B_p, \qquad J > 0.$$

The ground state space of this Hamiltonian is the stabilizer space of the code. Excited states correspond to those of anyons, with the energy proportional to their number. Local errors are therefore energetically suppressed by the gap, which has been shown to be stable against local perturbations.[16] However, the dynamic effects of such perturbations can still cause problems for the code.[17][18]
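A short worked consequence follows, assuming the form $H = -J \sum_v A_v - J \sum_p B_p$ with $J > 0$. Write $N_v$ and $N_p$ for the numbers of vertices and plaquettes, and $n_e$ and $n_m$ for the numbers of e and m anyons (symbols introduced here). Every stabilizer has eigenvalues ±1, and each anyon is a stabilizer with eigenvalue −1, contributing $+J$ instead of $-J$:

$$
E = -J\,(N_v + N_p) + 2J\,(n_e + n_m).
$$

Since anyons on the torus are created in pairs, the lowest excitation lies $4J$ above the ground state. Such an excitation is produced, for example, by a single-qubit Z error.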

The gap also gives the code a certain resilience against thermal errors. As a result, the stored information remains correctable almost surely up to a certain critical time. This time increases with $J$, but since arbitrary increases of this coupling are unrealistic, the protection given by the Hamiltonian still has its limits.

Whether the surface code can be made into a fully self-correcting quantum memory is a frequently considered question. Self-correction means that the Hamiltonian naturally suppresses errors indefinitely, leading to a lifetime that diverges in the thermodynamic limit. It has been found that this is possible in the toric code only if long-range interactions are present between anyons.[19][20] Proposals have been made for realizing such interactions in the lab.[21] Another approach is the generalization of the model to higher dimensions, with self-correction possible in 4D with only quasi-local interactions.[22]

Generalizations

It is possible to define similar codes using higher-dimensional spins. These are the quantum double models[23] and string-net models,[24] which allow a greater richness in the behaviour of anyons, and so may be used for more advanced quantum computation and error correction proposals.[25] These not only include models with Abelian anyons, but also those with non-Abelian statistics.[26][27][28]

Experimental progress

The most explicit demonstration of the properties of the toric code has been in state-based approaches. Rather than attempting to realize the Hamiltonian, these simply prepare the code in the stabilizer space. Using this technique, experiments have been able to demonstrate the creation, transport and statistics of the anyons[29][30][31] and measurement of the topological entanglement entropy.[31] More recent experiments have also been able to demonstrate the error correction properties of the code.[32][31]

For realizations of the toric code and its generalizations with a Hamiltonian, much progress has been made using Josephson junctions. The theory of how the Hamiltonians may be implemented has been developed for a wide class of topological codes.[33] An experiment has also been performed, realizing the toric code Hamiltonian for a small lattice, and demonstrating the quantum memory provided by its degenerate ground state.[34]

Other theoretical and experimental works towards realizations are based on cold atoms. A toolkit of methods that may be used to realize topological codes with optical lattices has been explored,[35] as have experiments concerning minimal instances of topological order.[36] Such minimal instances of the toric code have been realized experimentally within isolated square plaquettes.[37] Progress is also being made in simulations of the toric code with Rydberg atoms, in which the Hamiltonian and the effects of dissipative noise can be demonstrated.[38][39] Experiments in Rydberg atom arrays have also realized the toric code with periodic boundary conditions in two dimensions by coherently transporting arrays of entangled atoms.[40]

As of 2025, Google Quantum AI has implemented the rotated planar code with code distances up to 7 on its newest generation of superconducting quantum processors, Willow. The experiment demonstrated a logical error suppression factor Λ slightly larger than 2 when the code distance is increased by 2, indicating below-threshold behavior.[7]